Design and Manage Apache Druid with DbSchema

Build a clearer workflow for Apache Druid: reverse engineer existing schemas into interactive ER diagrams, model changes visually, and generate reviewed SQL scripts before deployment.

DbSchema is built for visual modeling, schema documentation, and deployment. Keep an offline model in Git, collaborate across teams, and publish documentation that developers, analysts, and stakeholders can navigate in minutes.


What happens after you download?

Get to your first Apache Druid schema diagram in minutes. No account, no credit card.

Install in minutes

Download the installer for Windows, macOS, or Linux and launch DbSchema. No signup required.

Connect to Apache Druid or open a sample

Reverse engineer an existing Apache Druid database or open a sample model to explore tables, relationships, and indexes.

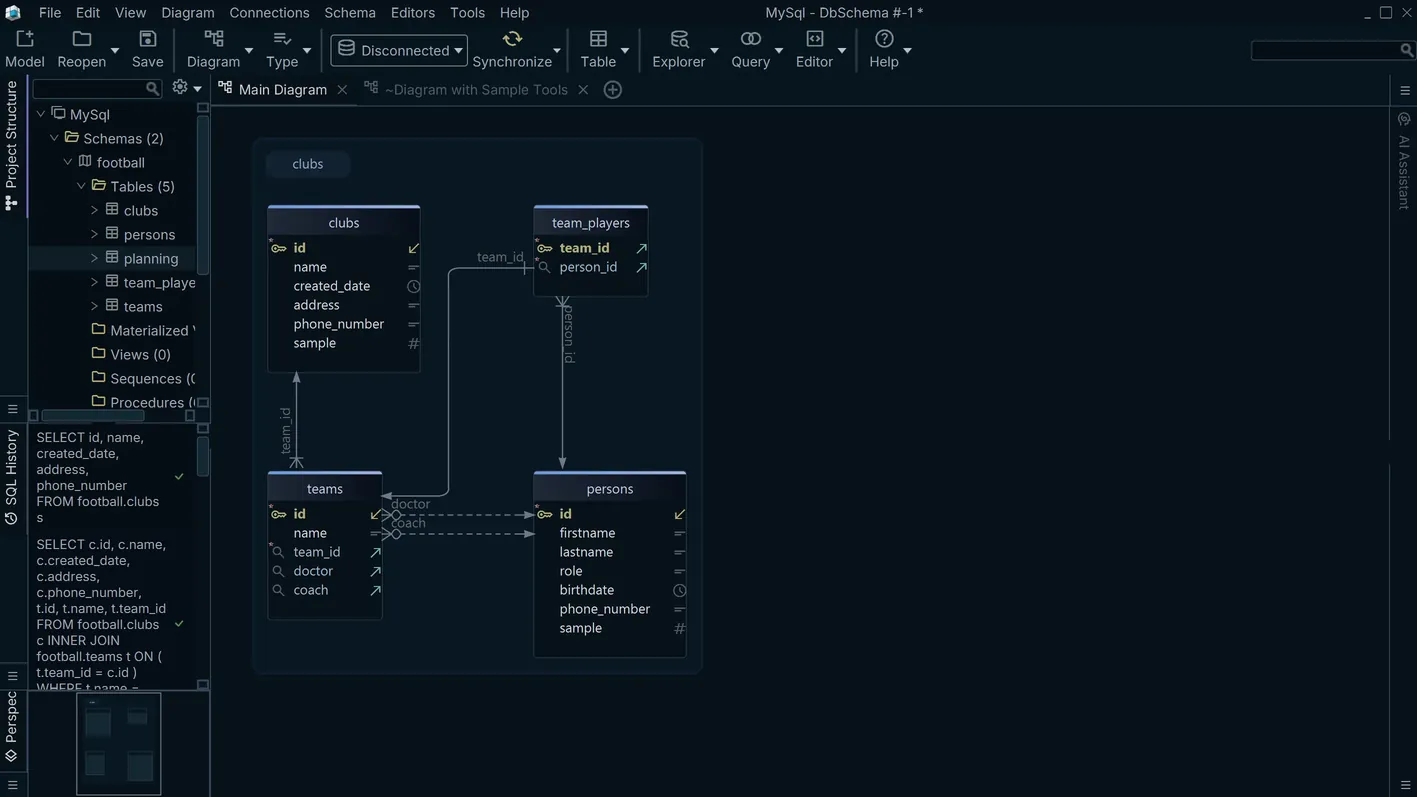

Design, document, and deploy

Edit schema visually, generate documentation, and prepare reviewed migration scripts for safer releases.

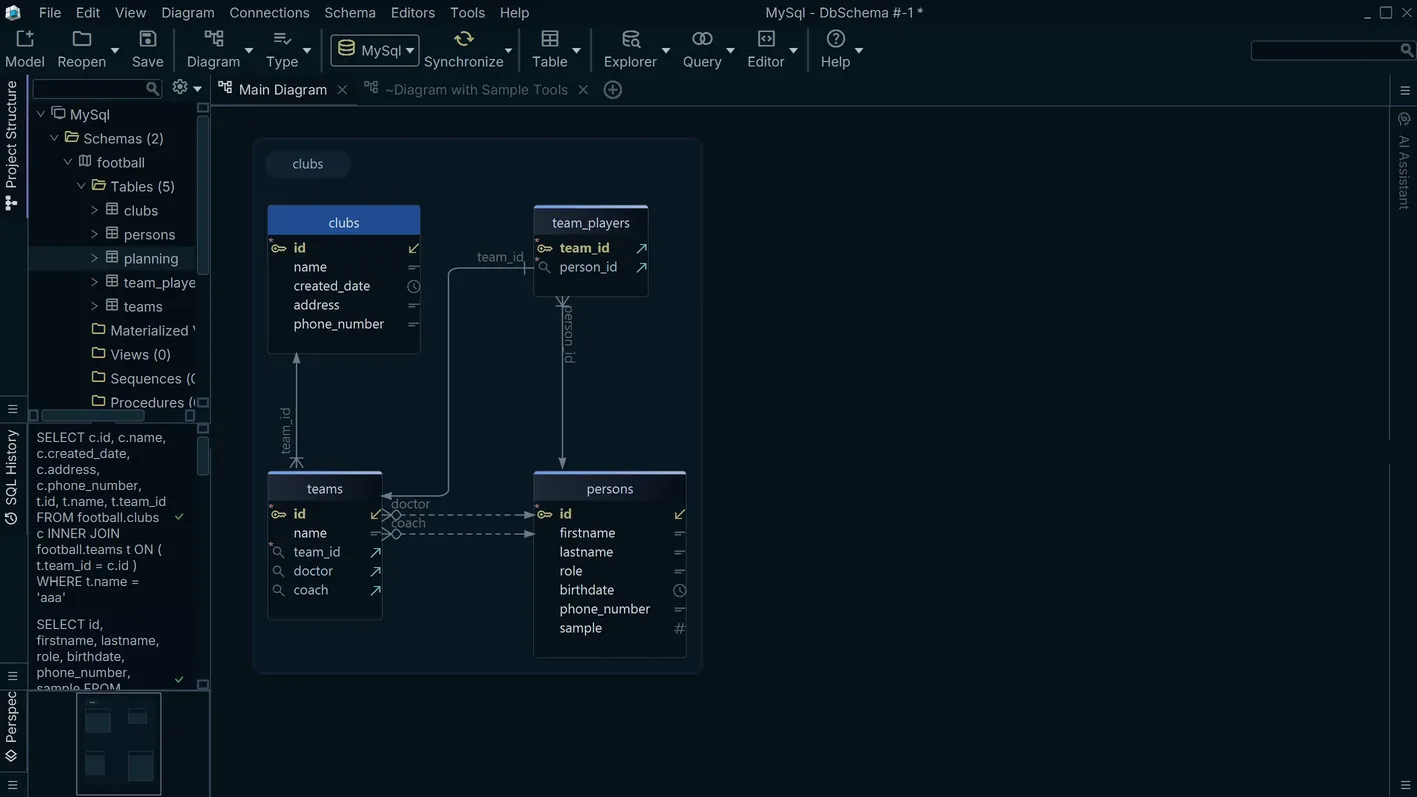

Datasource and Segment Architecture

Apache Druid is a high-performance real-time OLAP database designed for sub-second queries on large event-driven datasets. Rather than traditional tables, Druid organizes data into datasources that are physically partitioned into time-based segments stored in deep storage such as Amazon S3 or HDFS. Each segment stores its data in a columnar layout optimized for scan-heavy analytical workloads and, when rollup is enabled at ingestion, holds pre-aggregated rows. DbSchema connects to Druid through the Apache Calcite Avatica JDBC protocol and introspects datasources as schema objects, rendering them in a visual schema diagram. You can annotate datasources, group them by subject area, and generate documentation that captures the column names, data types, and relationships modeled in your ingestion specs.
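To make the time-based partitioning concrete, here is a minimal sketch of how an event timestamp maps to the time chunk that contains it. It assumes a DAY segment granularity and UTC boundaries; the function name `day_time_chunk` is hypothetical and is not part of Druid or DbSchema.

```python
from datetime import datetime, timedelta, timezone
from typing import Tuple


def day_time_chunk(event_time: datetime) -> Tuple[datetime, datetime]:
    """Return the [start, end) UTC interval of the DAY time chunk holding event_time.

    Assumption: segment granularity is DAY and chunk boundaries fall on UTC midnight.
    event_time is expected to be timezone-aware.
    """
    utc_time = event_time.astimezone(timezone.utc)
    start = utc_time.replace(hour=0, minute=0, second=0, microsecond=0)
    return start, start + timedelta(days=1)


# An event at 13:45 UTC on 15 March lands in the chunk covering that whole day.
start, end = day_time_chunk(datetime(2024, 3, 15, 13, 45, tzinfo=timezone.utc))
print(f"{start.isoformat()}/{end.isoformat()}")
```

Queries that filter on a narrow time range only need to touch the segments whose intervals overlap that range, which is one reason time filters matter so much in Druid SQL.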


Writing Analytical Druid SQL in the SQL Editor

Druid SQL is a SQL dialect built on Apache Calcite that supports GROUP BY, ORDER BY, time-floor functions like TIME_FLOOR(), and approximate aggregations such as APPROX_COUNT_DISTINCT(). DbSchema's SQL editor connects over the Avatica protocol and provides auto-completion for datasource and column names, making it straightforward to compose time-partitioned aggregation queries without memorizing exact column spellings. Results are displayed in a paginated grid with support for copying rows or exporting to CSV. You can organize saved queries by datasource and share them across the team by committing the query files to version control alongside your ingestion specs.
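As an illustration of the kind of query described above, the sketch below combines TIME_FLOOR() and APPROX_COUNT_DISTINCT() in one hourly aggregation. The datasource name `wikipedia` and its `channel` and `user` columns are assumptions borrowed from a typical tutorial dataset, so substitute your own names. The SQL text can be pasted into the SQL editor as-is; the Python wrapper is only one way to try it outside DbSchema, and it posts to Druid's HTTP SQL endpoint (`/druid/v2/sql`) rather than using Avatica.

```python
import json
import urllib.request

# Assumption: a datasource named "wikipedia" with "channel" and "user" columns.
# "user" is quoted because it is a reserved word in Druid SQL.
HOURLY_EDITORS_SQL = """
SELECT
  TIME_FLOOR(__time, 'PT1H') AS hour_bucket,
  channel,
  APPROX_COUNT_DISTINCT("user") AS approx_editors
FROM wikipedia
WHERE __time >= CURRENT_TIMESTAMP - INTERVAL '1' DAY
GROUP BY 1, 2
ORDER BY hour_bucket, approx_editors DESC
""".strip()


def build_sql_request(broker_url: str, sql: str) -> urllib.request.Request:
    """Build a POST request for Druid's HTTP SQL API without sending it."""
    body = json.dumps({"query": sql, "resultFormat": "object"}).encode("utf-8")
    return urllib.request.Request(
        url=broker_url.rstrip("/") + "/druid/v2/sql",
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def run_sql(broker_url: str, sql: str) -> list:
    """Send the query and return the rows as a list of dicts."""
    with urllib.request.urlopen(build_sql_request(broker_url, sql)) as response:
        return json.loads(response.read())


if __name__ == "__main__":
    # Hypothetical Broker address; requires a reachable Druid cluster.
    for row in run_sql("http://broker:8082", HOURLY_EDITORS_SQL):
        print(row)
```

Keeping the time filter in the WHERE clause limits the scan to recent segments, which is usually the first thing to check when an aggregation query runs slowly.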

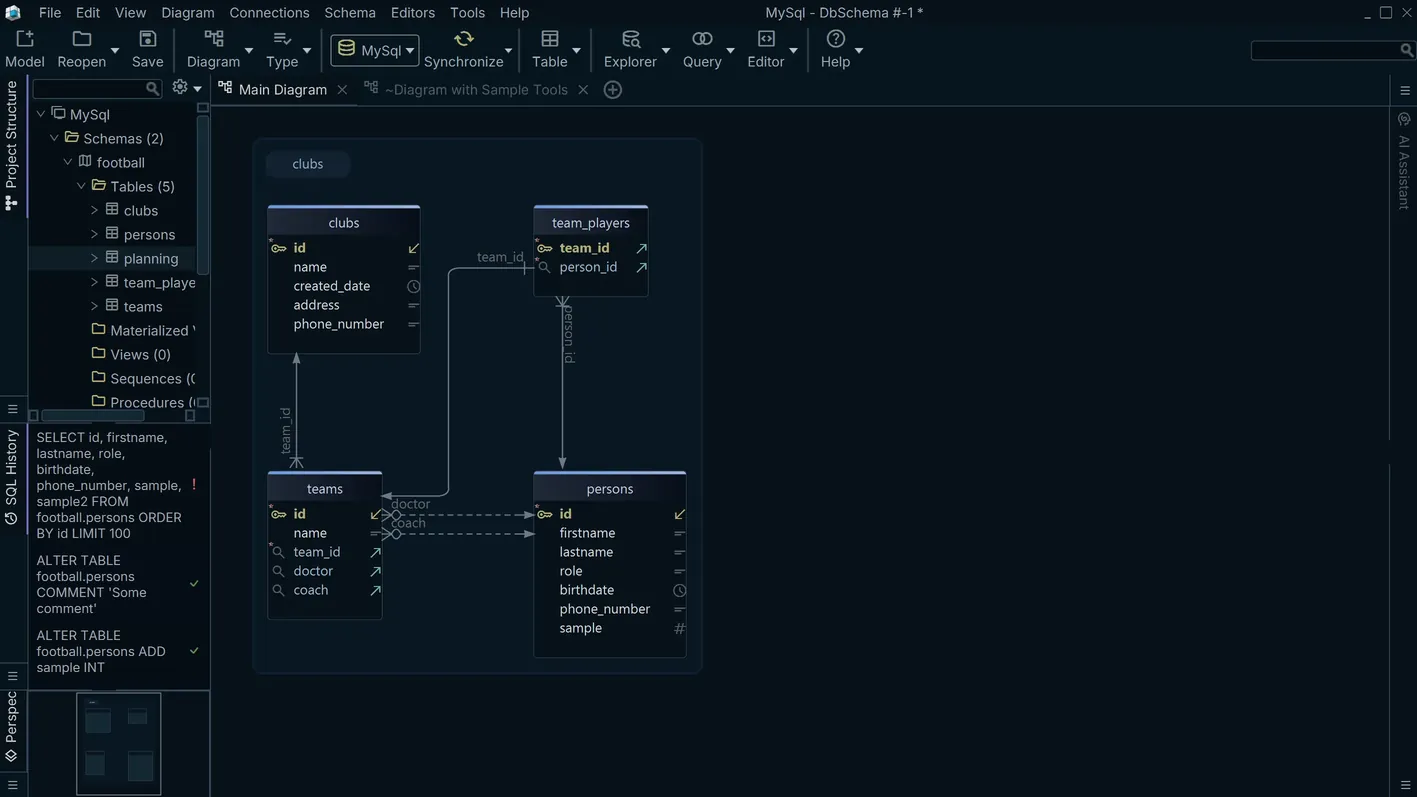

Exploring Ingested Datasets Visually

Once a Druid datasource has been ingested, whether via a batch spec or Kafka-based streaming ingestion, DbSchema's data explorer lets you sample rows, filter by time range or dimension value, and inspect the exact column types that Druid assigned during ingestion. This is particularly useful for validating that a new ingestion spec produced the expected schema: you can compare the column list in the data explorer against the dimensionsSpec and metricsSpec in your ingestion JSON without leaving DbSchema. The explorer also handles Druid's __time column natively, displaying timestamps in a human-readable format.
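The comparison against dimensionsSpec and metricsSpec can also be scripted. The following is a minimal sketch, assuming a native ingestion spec whose schema lives under `spec.dataSchema` and a list of actual column names copied from the data explorer. The helper names `expected_columns` and `schema_drift` and the sample spec are hypothetical.

```python
def expected_columns(ingestion_spec: dict) -> set:
    """Collect the column names an ingestion spec should produce."""
    data_schema = ingestion_spec["spec"]["dataSchema"]
    names = {"__time"}  # every Druid datasource has a __time column
    for dim in data_schema.get("dimensionsSpec", {}).get("dimensions", []):
        # Dimensions may be plain strings or objects with a "name" field.
        names.add(dim if isinstance(dim, str) else dim["name"])
    for metric in data_schema.get("metricsSpec", []):
        names.add(metric["name"])
    return names


def schema_drift(ingestion_spec: dict, actual_columns: list) -> dict:
    """Report columns missing from the datasource and columns not in the spec."""
    expected = expected_columns(ingestion_spec)
    actual = set(actual_columns)
    return {
        "missing": sorted(expected - actual),
        "unexpected": sorted(actual - expected),
    }


# Illustrative spec fragment; real specs carry many more fields.
sample_spec = {
    "spec": {
        "dataSchema": {
            "dataSource": "wikipedia",
            "dimensionsSpec": {
                "dimensions": ["channel", {"type": "long", "name": "added"}]
            },
            "metricsSpec": [{"type": "count", "name": "count"}],
        }
    }
}

print(schema_drift(sample_spec, ["__time", "channel", "count", "page"]))
```

A non-empty "missing" list usually means a dimension was misspelled or dropped in the spec, while "unexpected" columns often point to schema discovery or an older spec version still feeding the datasource.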

Connection Setup and JDBC URL

DbSchema connects to Apache Druid using the Apache Calcite Avatica remote JDBC driver (org.apache.calcite.avatica.remote.Driver). The JDBC URL points to Druid's Broker node and follows the pattern jdbc:avatica:remote:url=http://broker:8082/druid/v2/sql/avatica/, where broker is the hostname or IP of your Druid Broker service and 8082 is the default Druid Broker port. For secured clusters, replace http with https and supply credentials in the connection dialog. Download the Avatica standalone JDBC JAR from the Apache Calcite Avatica releases page and register it under DbSchema's driver manager before creating the connection.
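If you manage several clusters, the URL pattern above is easy to generate rather than type by hand. This is a small sketch; the helper name `avatica_jdbc_url` and the example hostname are hypothetical, and the resulting string is what you would paste into the connection dialog.

```python
# Driver class named in the connection setup above.
DRIVER_CLASS = "org.apache.calcite.avatica.remote.Driver"


def avatica_jdbc_url(host: str, port: int = 8082, secure: bool = False) -> str:
    """Build the Avatica JDBC URL for a Druid Broker.

    port defaults to 8082, the default Broker port; secure switches http to https.
    """
    scheme = "https" if secure else "http"
    return f"jdbc:avatica:remote:url={scheme}://{host}:{port}/druid/v2/sql/avatica/"


print(avatica_jdbc_url("broker"))
# Hypothetical secured cluster on a non-default port.
print(avatica_jdbc_url("druid.example.internal", port=8282, secure=True))
```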

Why Teams Use DbSchema with Apache Druid

- Render Druid datasources as visual schema diagrams to communicate the data model to analysts who are unfamiliar with ingestion specs.

- Write and test complex TIME_FLOOR and approximate aggregation queries in the SQL editor before embedding them in BI tool connections.

- Validate ingestion results immediately by sampling datasource rows in the data explorer right after a batch ingestion job completes.

- Document multi-datasource analytics pipelines with annotated schema layouts that can be exported as HTML or PDF.

- Use DbSchema's offline schema model to design Druid datasource schemas before configuring ingestion specs, reducing costly re-ingestion cycles.

- Compare column lists between DbSchema's live schema and your ingestion spec JSON to catch schema drift early.

Related databases

Teams working with Apache Druid often use these engines too. Explore dedicated guides and JDBC setup for each.