Download Databricks JDBC Driver

What Is a JDBC Driver?

A JDBC driver is a Java library file (.jar) that enables Java applications — including DbSchema — to communicate with a database over a standard API. The driver translates generic JDBC calls into the network protocol understood by Databricks, so you never have to write low-level socket code. Drivers are typically distributed by the database vendor or as open-source projects.

Understanding the JDBC URL

Every JDBC driver identifies the target database through a connection URL. The URL encodes the hostname, port, database name, and any driver-specific parameters as a single string. The exact syntax varies per driver — the details for Databricks are listed in the section below.
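To make the URL anatomy concrete, here is a minimal Java sketch that splits a semicolon-delimited JDBC URL into the parts named above. The class name `JdbcUrlParts` and the host `example-host` are hypothetical, and the parser only assumes the `jdbc:<subprotocol>://host:port/database;key=value` shape; other drivers may use a different syntax.

```java
import java.util.LinkedHashMap;
import java.util.Map;

// Hypothetical helper: splits a JDBC URL of the form
// jdbc:<subprotocol>://host:port/database;key=value;key=value
public class JdbcUrlParts {
    public final String subprotocol;
    public final String host;
    public final int port;          // -1 when the URL carries no port
    public final String database;   // empty when the URL carries no database
    public final Map<String, String> params = new LinkedHashMap<>();

    public JdbcUrlParts(String url) {
        if (!url.startsWith("jdbc:")) {
            throw new IllegalArgumentException("Not a JDBC URL: " + url);
        }
        String rest = url.substring("jdbc:".length());
        int sep = rest.indexOf("://");
        if (sep < 0) {
            throw new IllegalArgumentException("Unsupported URL shape: " + url);
        }
        subprotocol = rest.substring(0, sep);

        // The first segment is the location; the rest are driver parameters.
        String[] segments = rest.substring(sep + 3).split(";");
        String location = segments[0];
        int slash = location.indexOf('/');
        String hostPort = slash < 0 ? location : location.substring(0, slash);
        database = slash < 0 ? "" : location.substring(slash + 1);

        int colon = hostPort.lastIndexOf(':');
        host = colon < 0 ? hostPort : hostPort.substring(0, colon);
        port = colon < 0 ? -1 : Integer.parseInt(hostPort.substring(colon + 1));

        for (int i = 1; i < segments.length; i++) {
            String[] kv = segments[i].split("=", 2);
            params.put(kv[0], kv.length > 1 ? kv[1] : "");
        }
    }

    public static void main(String[] args) {
        // example-host is a placeholder, not a real endpoint.
        JdbcUrlParts p = new JdbcUrlParts(
                "jdbc:spark://example-host:443/default;transportMode=http;ssl=1");
        System.out.println("subprotocol = " + p.subprotocol);
        System.out.println("host        = " + p.host);
        System.out.println("port        = " + p.port);
        System.out.println("database    = " + p.database);
        System.out.println("params      = " + p.params);
    }
}
```

The sketch is for inspection only. In practice the driver parses the URL itself, so the whole string is passed unchanged.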

Download the Databricks JDBC Driver

Databricks is a unified data analytics platform built on Apache Spark, providing a lakehouse architecture that combines the flexibility of data lakes with the reliability of data warehouses. It supports Delta Lake, MLflow, and collaborative notebooks for data engineering and ML workflows.

Databricks JDBC Driver Details

DbSchema uses the Simba Spark JDBC driver to connect to Databricks SQL warehouses.

- Required File(s): SparkJDBC42.jar

- Java Driver Class: com.simba.spark.jdbc.Driver

- JDBC URL: jdbc:spark://HOST:PORT/default;transportMode=http;ssl=1;httpPath=...

- Website: Databricks
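Outside DbSchema, the same driver class and URL pattern can be used from plain Java. The sketch below is a minimal example under several assumptions that are not stated on this page: port 443, token authentication through the `AuthMech`, `UID` and `PWD` properties, and the environment variable names `DATABRICKS_HOST`, `DATABRICKS_HTTP_PATH` and `DATABRICKS_TOKEN`, which are purely illustrative. Check the driver's own documentation for the authentication settings that apply to your workspace. `SparkJDBC42.jar` must be on the classpath for the connection step to work.

```java
import java.sql.Connection;
import java.sql.DriverManager;
import java.sql.ResultSet;
import java.sql.Statement;
import java.util.Properties;

public class DatabricksConnectSketch {
    // Driver class as listed in the driver details above.
    static final String DRIVER_CLASS = "com.simba.spark.jdbc.Driver";

    // Fills in the URL pattern from the driver details above.
    public static String buildUrl(String host, int port, String httpPath) {
        return "jdbc:spark://" + host + ":" + port
                + "/default;transportMode=http;ssl=1;httpPath=" + httpPath;
    }

    public static void main(String[] args) throws Exception {
        // Hypothetical environment variable names, used only for this sketch.
        String host = System.getenv("DATABRICKS_HOST");
        String httpPath = System.getenv("DATABRICKS_HTTP_PATH");
        String token = System.getenv("DATABRICKS_TOKEN");
        if (host == null || httpPath == null || token == null) {
            System.out.println("Set DATABRICKS_HOST, DATABRICKS_HTTP_PATH and "
                    + "DATABRICKS_TOKEN to try the connection.");
            return;
        }

        // Fails with ClassNotFoundException if SparkJDBC42.jar is not on the classpath.
        Class.forName(DRIVER_CLASS);

        // Assumption: personal-access-token authentication via driver properties.
        Properties props = new Properties();
        props.setProperty("AuthMech", "3");
        props.setProperty("UID", "token");
        props.setProperty("PWD", token);

        // Assumption: port 443; replace with the port of your SQL warehouse.
        String url = buildUrl(host, 443, httpPath);
        try (Connection conn = DriverManager.getConnection(url, props);
             Statement st = conn.createStatement();
             ResultSet rs = st.executeQuery("SELECT 1")) {
            while (rs.next()) {
                System.out.println("Connection OK, SELECT 1 returned " + rs.getInt(1));
            }
        }
    }
}
```

Keeping the token in a `Properties` object rather than in the URL avoids leaking it when the URL is logged.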

Download Databricks JDBC Driver

The driver archive is a zip file. Extract it and load the .jar files using DbSchema's Driver Manager.

DbSchema and Databricks

DbSchema connects to Databricks SQL warehouses via the Spark JDBC driver and renders Delta Lake table schemas as interactive diagrams. Use the Schema Synchronization feature to compare table definitions across Databricks environments and generate the DDL diff.

Have connection issues? Contact the DbSchema team for help.

Explore Databricks Visually with DbSchema

Once the JDBC driver is configured, DbSchema connects to your Databricks database and gives you a full graphical workbench, with no command line required. DbSchema is available as a free Community Edition and a full-featured PRO Edition, and no registration is needed to get started.

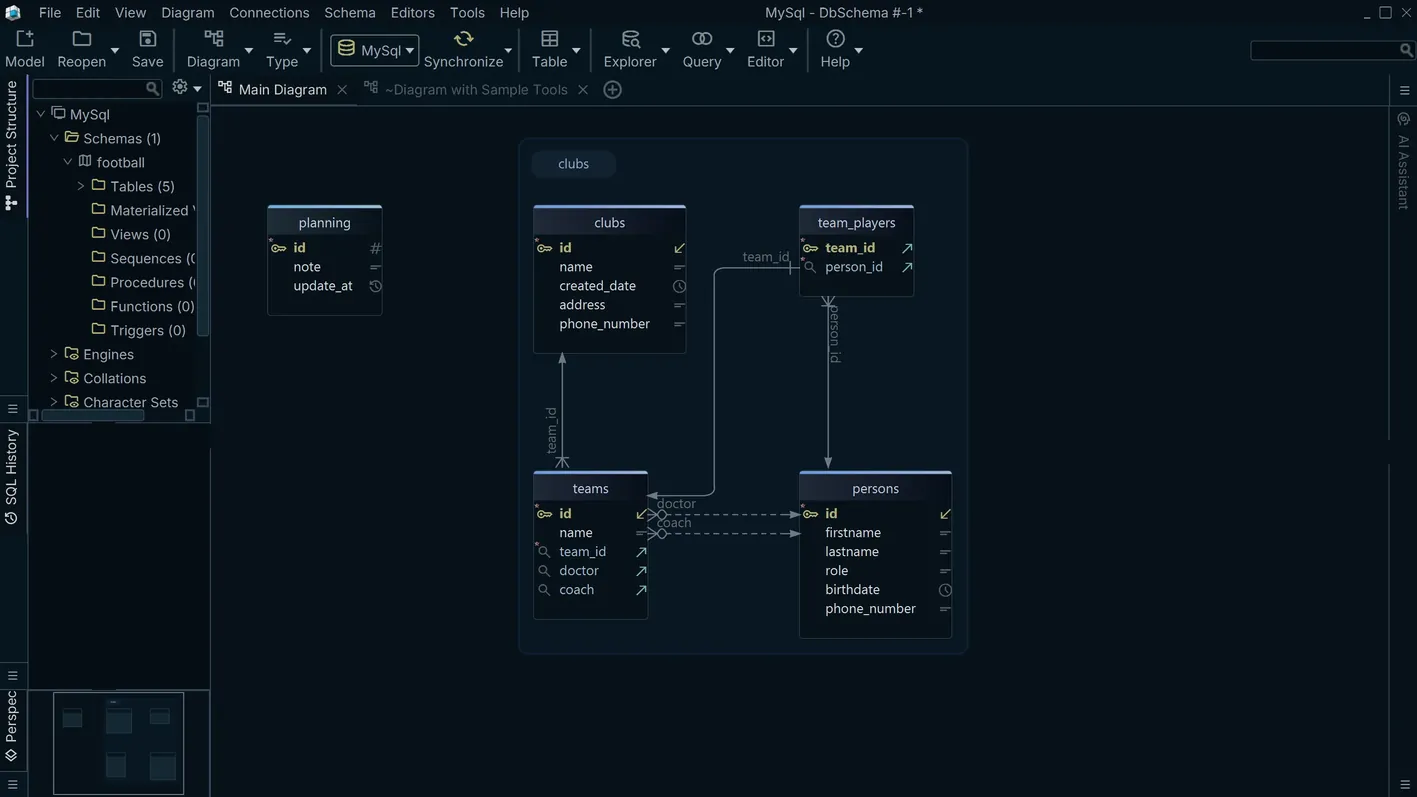

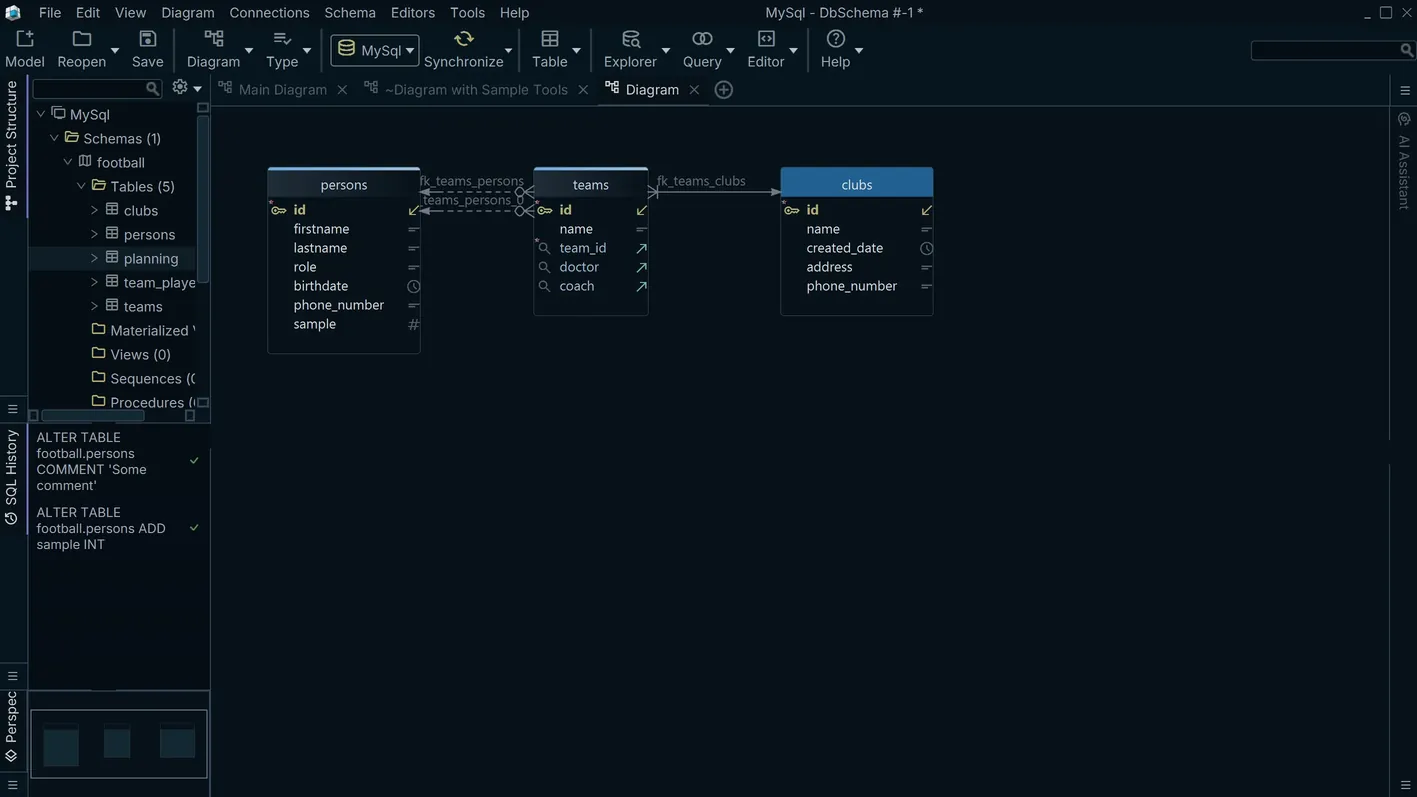

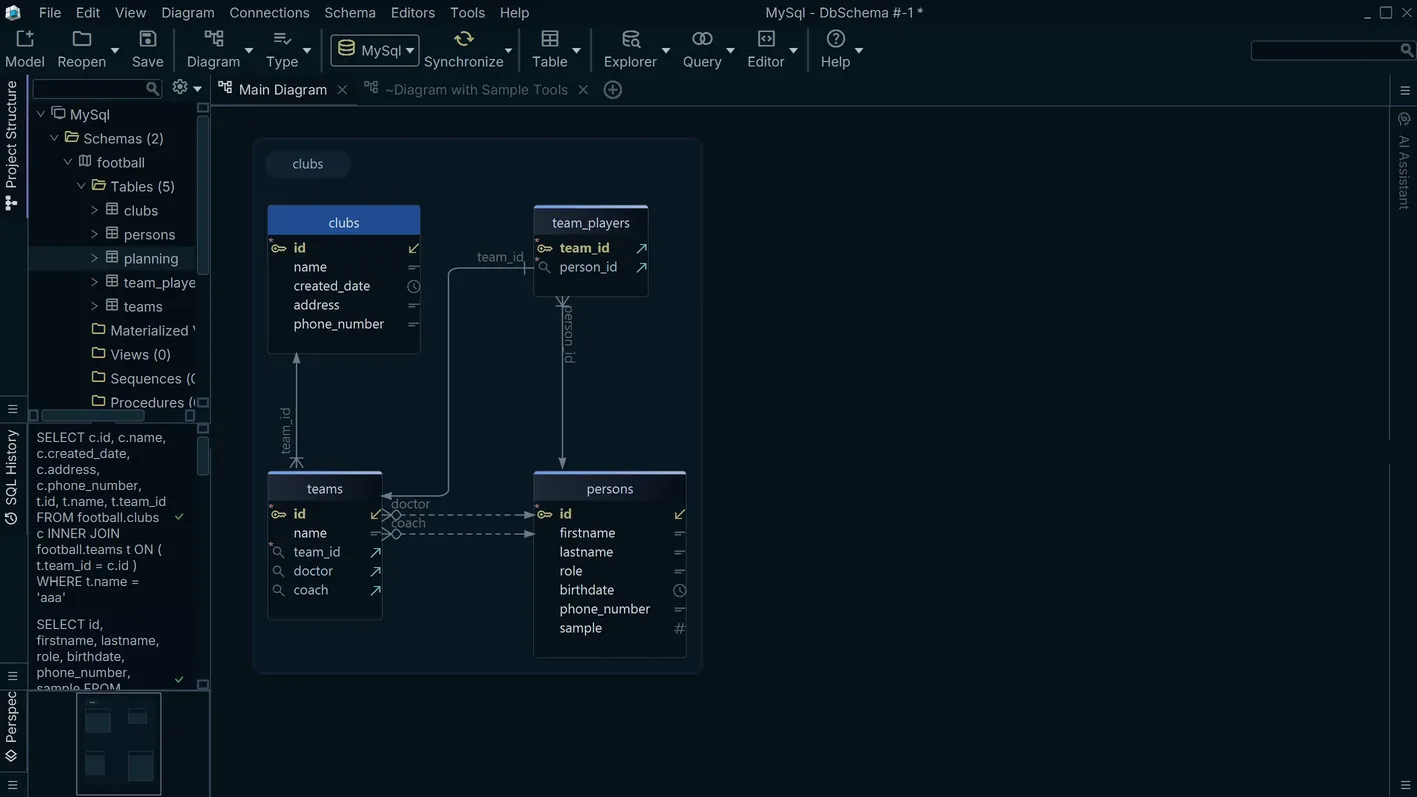

Interactive ER Diagrams

Reverse-engineer your Databricks schema into a drag-and-drop ER diagram. Arrange tables visually, add new columns, define foreign keys, and let DbSchema generate the DDL — all without writing SQL by hand.

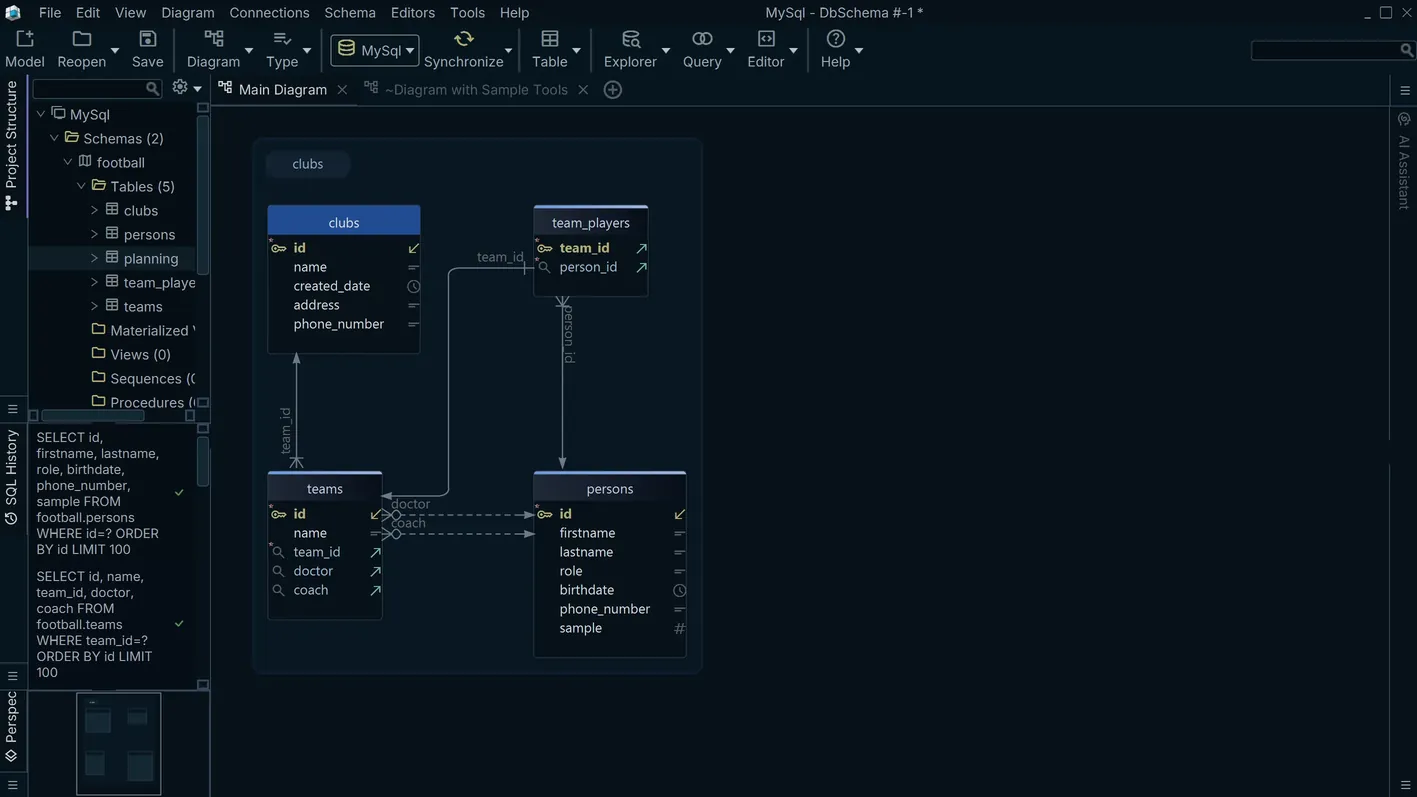

Visual Query Builder

Compose Databricks queries by clicking on tables and columns — no SQL knowledge required. Add joins, filters, groupings, and aggregations through a point-and-click interface, then copy the generated SQL or run it directly against the live database.

Relational Data Explorer

Browse Databricks table data and follow foreign key relationships across tables in a single view. Edit cells inline, filter rows, and paginate through large datasets — all without leaving the explorer.

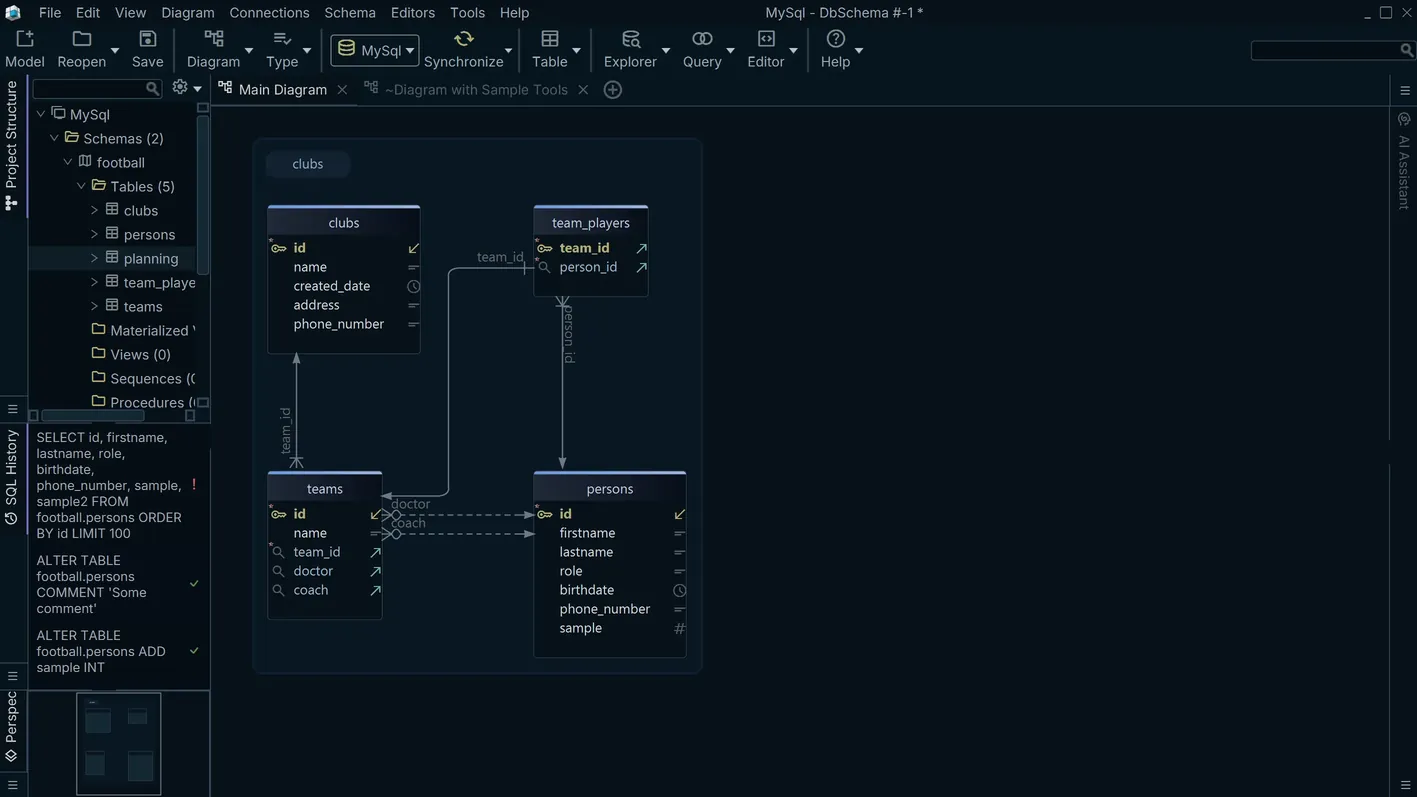

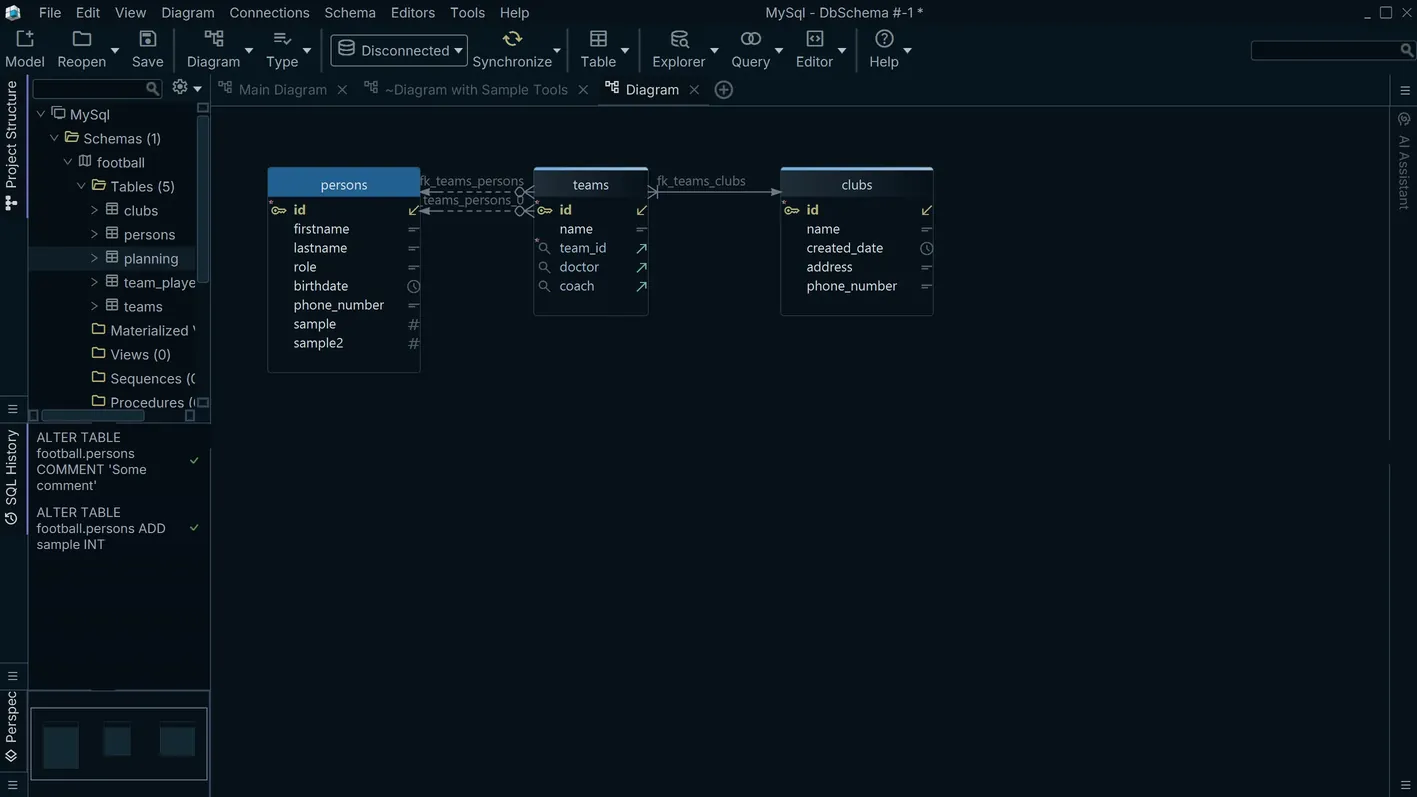

Schema Synchronization

Compare your Databricks schema across development, staging, and production environments. DbSchema generates the exact ALTER statements needed to close the gap and lets you review every change before executing — reducing the risk of unintended schema drift.

SQL Editor

Write and execute Databricks queries in the integrated SQL editor with schema-aware autocomplete, syntax highlighting, and instant result display. Run scripts, inspect execution plans, and export results to CSV or JSON from a single interface.

HTML Schema Documentation

Generate a static HTML site documenting every table, column, type, index, and relationship in your Databricks schema. Share it with your team or embed it in your project wiki — no extra tooling required.

For the full feature list and edition comparison, visit the DbSchema PRO Edition page.