Design and Manage dBASE / DBF Files with DbSchema

Build a clearer workflow for dBASE / DBF Files: reverse engineer existing schemas into interactive ER diagrams, model changes visually, and generate reviewed SQL scripts before deployment.

DbSchema is built for visual modeling, schema documentation, and deployment. Keep an offline model in Git, collaborate across teams, and publish documentation that developers, analysts, and stakeholders can navigate in minutes.

What happens after you download?

Get to your first dBASE / DBF Files schema diagram in minutes. No account, no credit card.

Install in minutes

Download the installer for Windows, macOS, or Linux and launch DbSchema. No signup required.

Connect to dBASE / DBF Files or open a sample

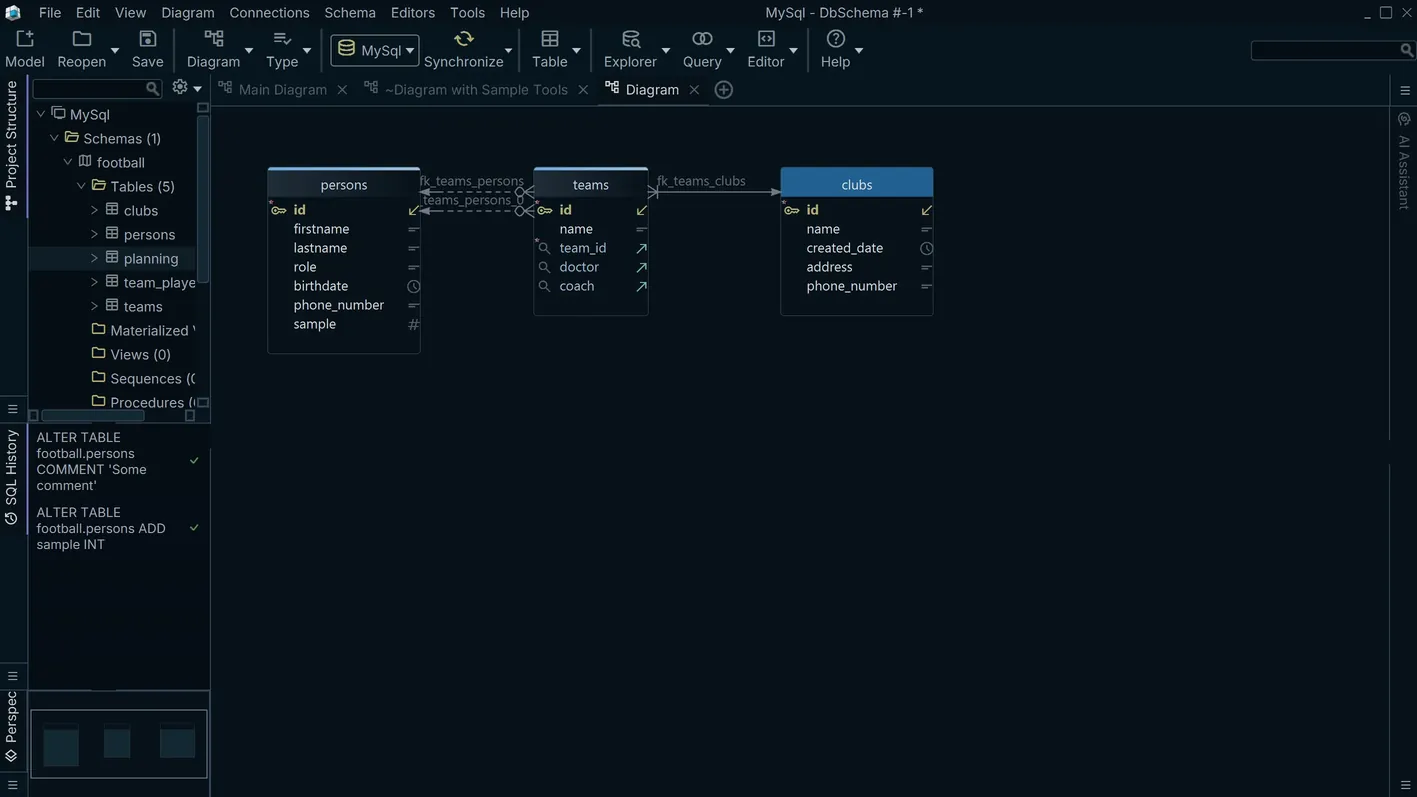

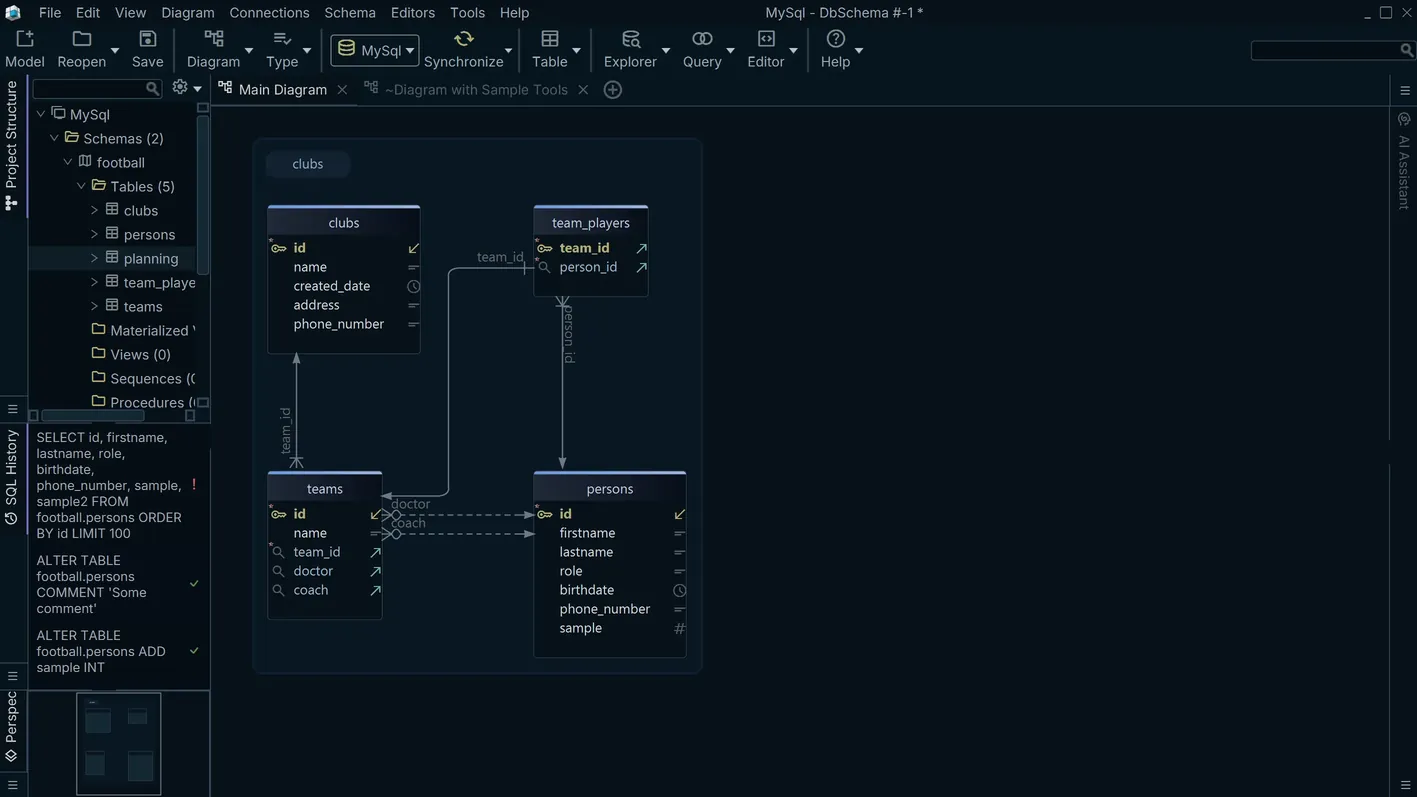

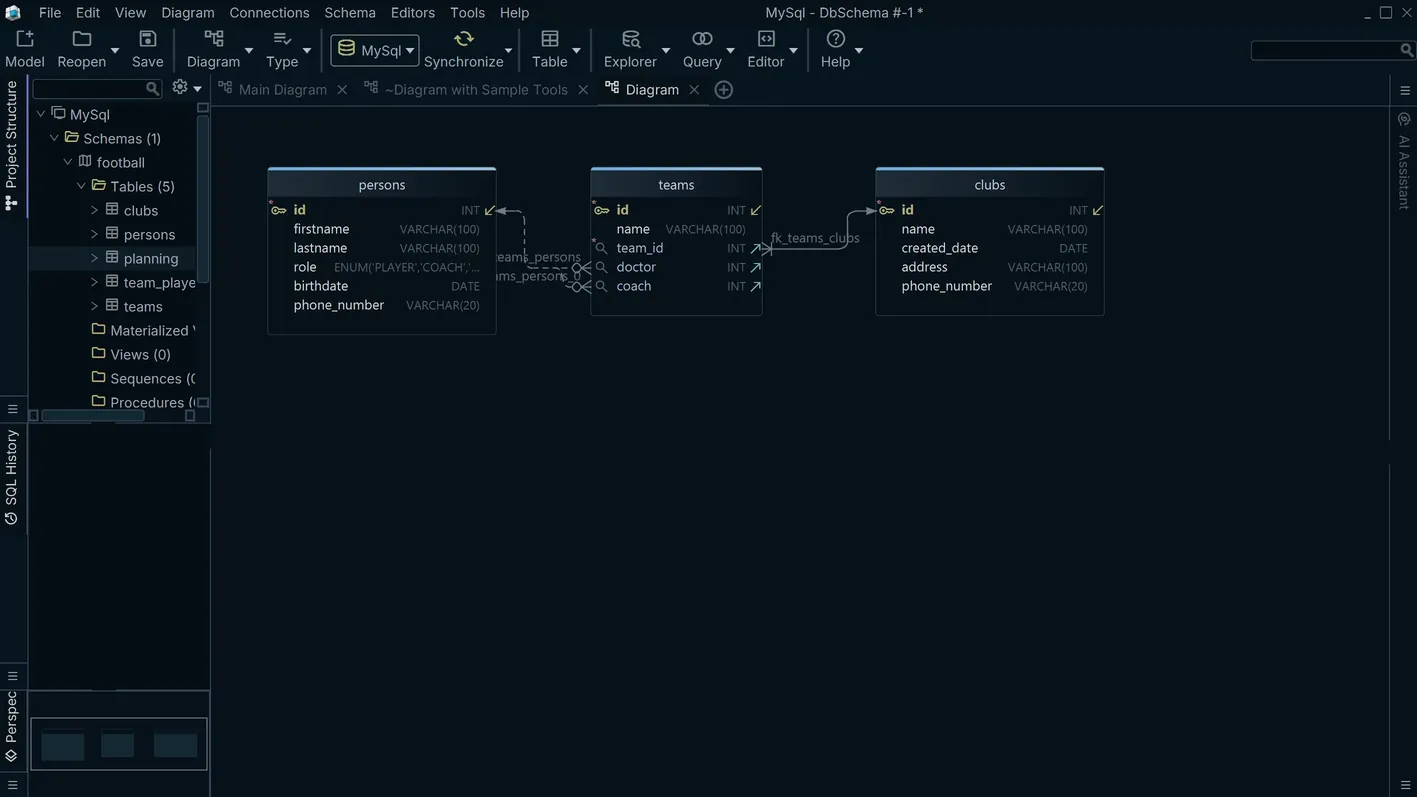

Reverse engineer an existing dBASE / DBF Files database or open a sample model to explore tables, relationships, and indexes.

Design, document, and deploy

Edit schema visually, generate documentation, and prepare reviewed migration scripts for safer releases.

Inspect and Query Legacy DBF Data Files

The DBF format originated with dBASE in the 1980s and was later adopted by FoxPro, Clipper, and Visual FoxPro. Point-of-sale systems, government agencies, and industrial applications still produce or consume DBF files today. DbSchema connects to a directory of DBF files via a JDBC driver, reads field definitions directly from the file headers, and presents the schema in the diagram canvas — giving teams a structured view of legacy data without the original dBASE or FoxPro tooling.

Open DBF Files Without Legacy Software

DbSchema connects to a directory of DBF files through a JDBC driver that understands the standard dBASE file format. Field names, types, and widths defined in each file header are loaded into the schema diagram, allowing developers to understand the data model of a legacy application without access to the original development environment.
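To illustrate what "reading field definitions from the file header" involves, here is a minimal Python sketch. It assumes the classic dBASE III layout: a 32-byte file header followed by 32-byte field descriptors, terminated by a 0x0D byte. It is not DbSchema's or any JDBC driver's implementation, and the names `parse_dbf_header`, `DbfField`, and `DbfHeader` are hypothetical.

```python
import struct
from dataclasses import dataclass
from typing import List


@dataclass
class DbfField:
    name: str      # up to 10 characters, stored null-padded in 11 bytes
    type: str      # one-letter type code such as "C", "N", "D", "L", or "M"
    length: int    # field width in bytes
    decimals: int  # digits after the decimal point (numeric fields)


@dataclass
class DbfHeader:
    version: int
    record_count: int
    header_length: int
    record_length: int
    fields: List[DbfField]


def parse_dbf_header(data: bytes) -> DbfHeader:
    """Parse the file header and field descriptors of a dBASE III-style DBF file."""
    # Byte 0: version; bytes 1-3: last-update date (YY, MM, DD).
    # Bytes 4-7: record count, 8-9: header length, 10-11: record length (little-endian).
    version = data[0]
    record_count, header_length, record_length = struct.unpack_from("<IHH", data, 4)

    fields: List[DbfField] = []
    offset = 32  # field descriptors start right after the 32-byte file header
    # Each descriptor is 32 bytes; a 0x0D byte marks the end of the list.
    while offset + 32 <= header_length and data[offset] != 0x0D:
        descriptor = data[offset:offset + 32]
        name = descriptor[:11].split(b"\x00", 1)[0].decode("ascii", errors="replace")
        fields.append(
            DbfField(
                name=name,
                type=chr(descriptor[11]),
                length=descriptor[16],
                decimals=descriptor[17],
            )
        )
        offset += 32
    return DbfHeader(version, record_count, header_length, record_length, fields)
```

For a hypothetical `customer.dbf`, `with open("customer.dbf", "rb") as f: header = parse_dbf_header(f.read())` would list its fields. The loop is bounded by `header_length` as well as by the 0x0D terminator, so a malformed file that lacks the terminator does not cause the parser to read past the header.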

Browse DBF Table Contents

The data explorer provides row-level access to DBF file contents, with column filtering to locate specific records. Use it to verify data integrity before migrating DBF data to a modern relational database, or to answer ad-hoc questions about a legacy dataset in SQL rather than in the dBASE command language.
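The kind of record-level check described above can be sketched in Python. Assuming dBASE III conventions, each fixed-width record starts with a deletion-flag byte (`*` for deleted rows, a space for active ones), followed by the fields in header order. The sketch counts active and deleted rows and collects values that fail to parse. Latin-1 decoding is an assumption, since the actual code page depends on the file, and `decode_record` and `validate_records` are hypothetical helpers, not part of DbSchema.

```python
import datetime
from decimal import Decimal, InvalidOperation
from typing import Dict, Iterable, List, Tuple

# (name, type code, width) triples, e.g. taken from the file header.
FieldSpec = Tuple[str, str, int]


def decode_value(ftype: str, raw: bytes, encoding: str = "latin-1"):
    """Decode one fixed-width field; raise ValueError if its content is malformed."""
    if ftype == "C":
        # Character fields are space-padded on the right.
        return raw.decode(encoding).rstrip()
    text = raw.decode(encoding).strip()
    if ftype == "N":
        if not text:
            return None
        try:
            return Decimal(text)
        except InvalidOperation:
            raise ValueError(f"invalid numeric value {text!r}") from None
    if ftype == "D":
        # Dates are stored as eight ASCII digits, YYYYMMDD; blanks mean "no date".
        if not text:
            return None
        return datetime.datetime.strptime(text, "%Y%m%d").date()
    if ftype == "L":
        if text in ("", "?"):
            return None  # uninitialized logical value
        if text in ("T", "t", "Y", "y"):
            return True
        if text in ("F", "f", "N", "n"):
            return False
        raise ValueError(f"invalid logical value {text!r}")
    # Memo pointers and other type codes are returned undecoded.
    return raw


def decode_record(record: bytes, fields: List[FieldSpec]) -> Tuple[bool, Dict[str, object]]:
    """Return (is_deleted, values) for one record."""
    deleted = record[0:1] == b"*"
    values: Dict[str, object] = {}
    pos = 1  # skip the deletion flag
    for name, ftype, width in fields:
        values[name] = decode_value(ftype, record[pos:pos + width])
        pos += width
    return deleted, values


def validate_records(
    records: Iterable[bytes], fields: List[FieldSpec]
) -> Tuple[int, int, List[Tuple[int, str]]]:
    """Count active and deleted rows and list (row index, error) for undecodable rows."""
    active = deleted = 0
    problems: List[Tuple[int, str]] = []
    for index, record in enumerate(records):
        if record[0:1] == b"*":
            deleted += 1
        else:
            active += 1
        try:
            decode_record(record, fields)
        except ValueError as exc:
            problems.append((index, str(exc)))
    return active, deleted, problems
```

Deleted rows are counted rather than dropped, because whether they belong in the migrated data is a project decision rather than something the file format settles.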

Review and Map Field Definitions

DBF field types — Character, Numeric, Date, Logical, and Memo — map to SQL types when accessed through the JDBC layer. DbSchema's table editor lets you review each field's definition and plan the type mapping for a migration to PostgreSQL, MySQL, or another target database.
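As an example of planning that mapping, the Python sketch below turns DBF field definitions into a PostgreSQL `CREATE TABLE` statement. The mapping itself is an illustrative assumption, not DbSchema's built-in mapping. A MySQL or other target would need its own choices, and type codes outside the five listed above raise an error instead of being guessed. `dbf_to_postgres_type` and `create_table_sql` are hypothetical names.

```python
from typing import List, Tuple

# (name, type code, width, decimals) as read from the DBF header.
FieldDef = Tuple[str, str, int, int]


def dbf_to_postgres_type(ftype: str, width: int, decimals: int) -> str:
    """Map one DBF field definition to a PostgreSQL column type (assumed mapping)."""
    if ftype == "C":
        # Fixed-width, space-padded text; VARCHAR(width) holds the trimmed value.
        return f"VARCHAR({width})"
    if ftype == "N":
        # The DBF width counts the sign and decimal point as well as digits,
        # so NUMERIC(width, decimals) is a conservative upper bound.
        return f"NUMERIC({width}, {decimals})"
    if ftype == "D":
        return "DATE"
    if ftype == "L":
        return "BOOLEAN"
    if ftype == "M":
        return "TEXT"
    raise ValueError(f"no mapping defined for DBF type {ftype!r}")


def create_table_sql(table: str, fields: List[FieldDef]) -> str:
    """Build a CREATE TABLE statement with lower-cased, quoted identifiers."""
    columns = ",\n".join(
        f'    "{name.lower()}" {dbf_to_postgres_type(ftype, width, decimals)}'
        for name, ftype, width, decimals in fields
    )
    return f'CREATE TABLE "{table.lower()}" (\n{columns}\n);'
```

For instance, `create_table_sql("CUSTOMER", [("ID", "N", 6, 0), ("NAME", "C", 30, 0)])` produces a `"customer"` table with `"id" NUMERIC(6, 0)` and `"name" VARCHAR(30)` columns. Numeric fields with no decimals could be narrowed to `INTEGER` or `BIGINT` once the data has been checked.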

Connecting DbSchema to DBF Files

Use the xBaseJ or JDBF JDBC driver with the URL jdbc:xbase:/path/to/directory, where the path points to the directory containing the .dbf files; each file becomes a table. Download the driver JAR and register it in DbSchema's driver manager. For FoxPro DBF files that include CDX compound indexes, verify that the driver version supports compound index files, because some drivers silently skip indexed records when the CDX file is present but unsupported.

Memo fields stored in associated .dbt or .fpt files require a driver version that handles external memo storage.
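Because each .dbf file becomes a table and companion .cdx, .dbt, or .fpt files change what the driver must support, it can help to inventory the directory before connecting. This Python sketch is a hypothetical pre-flight check, not a DbSchema feature. It lists each table (the .dbf file name without its extension) together with the companion files found beside it, and it matches extensions case-insensitively because files from DOS-era systems are often upper-case.

```python
from pathlib import Path
from typing import Dict, List

# Companion files that may accompany a .dbf file and need driver support.
COMPANIONS = {
    ".cdx": "compound index",
    ".dbt": "external memo (dBASE)",
    ".fpt": "external memo (FoxPro)",
}


def scan_dbf_directory(directory: str) -> Dict[str, List[str]]:
    """Map each table name (the .dbf file stem) to the companion extensions found."""
    # Index files by lower-cased name so CUSTOMER.DBF matches customer.cdx.
    files = {p.name.lower(): p for p in Path(directory).iterdir() if p.is_file()}
    report: Dict[str, List[str]] = {}
    for lower_name, path in sorted(files.items()):
        if not lower_name.endswith(".dbf"):
            continue
        stem = lower_name[: -len(".dbf")]
        report[path.stem] = [ext for ext in COMPANIONS if stem + ext in files]
    return report
```

Tables that report `.cdx` are the ones to check against the driver's compound-index support, and `.dbt` or `.fpt` signals that the driver must handle external memo storage.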

DbSchema also provides its own open-source DBF JDBC driver, with source code available on GitHub.

Why DbSchema for DBF Migration Projects

- Read dBASE, FoxPro, and Clipper DBF files without installing any legacy software.

- Run SQL queries across multiple DBF files joined on shared key fields.

- Map DBF field types to SQL target types as part of a structured migration workflow.

- Export schema documentation for compliance reviews and project handovers.

- Browse and validate record-level data before committing migration scripts to production.
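Joining DBF tables on shared key fields is also a quick way to catch orphaned references before a target database starts enforcing foreign keys. The Python sketch below uses an in-memory SQLite database as a stand-in and assumes the rows of two hypothetical files, CUSTOMER.DBF and ORDERS.DBF, have already been decoded into tuples. It illustrates the query only, not how DbSchema executes SQL against DBF files.

```python
import sqlite3
from typing import Iterable, List, Tuple


def find_orphan_orders(
    customers: Iterable[Tuple[int, str]],
    orders: Iterable[Tuple[int, int, float]],
) -> List[Tuple[int, int]]:
    """Return (order_no, cust_id) for orders whose cust_id has no matching customer."""
    conn = sqlite3.connect(":memory:")
    try:
        # Hypothetical tables mirroring CUSTOMER.DBF and ORDERS.DBF.
        conn.execute("CREATE TABLE customer (cust_id INTEGER, name TEXT)")
        conn.execute(
            "CREATE TABLE orders (order_no INTEGER, cust_id INTEGER, amount REAL)"
        )
        conn.executemany("INSERT INTO customer VALUES (?, ?)", customers)
        conn.executemany("INSERT INTO orders VALUES (?, ?, ?)", orders)
        # A LEFT JOIN keeps every order; a NULL customer key means no match.
        rows = conn.execute(
            """
            SELECT o.order_no, o.cust_id
            FROM orders AS o
            LEFT JOIN customer AS c ON c.cust_id = o.cust_id
            WHERE c.cust_id IS NULL
            ORDER BY o.order_no
            """
        ).fetchall()
        return rows
    finally:
        conn.close()
```

An empty result means every order key resolves to a customer, so a foreign key on the target table should not reject those rows.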
